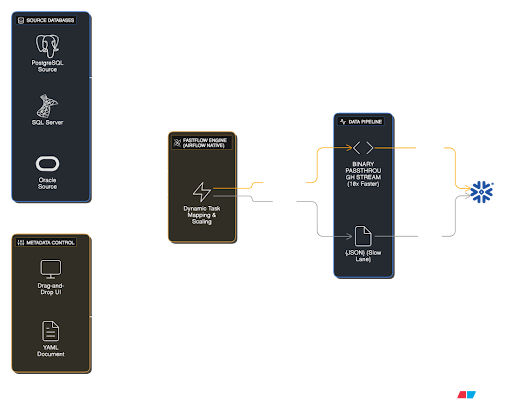

Fig 1.1: FastFlow Engine High-Level Architecture

Architectural Components

Metadata Control

As shown in the diagram (bottom left), workflows can be managed visually through a drag-and-drop UI or through YAML files (GitOps-compatible).

Engine & Scaling

The central engine uses Airflow's native Dynamic Task Mapping to scale the number of workers automatically with the data load.
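
Dynamic Task Mapping (available since Airflow 2.3) creates one mapped task instance per element of a list that is computed at run time. The following is a minimal sketch of how such a list might be built, assuming the load is partitioned by row count. The names `plan_chunks` and `rows_per_task` are hypothetical and are not part of FastFlow's API.

```python
from math import ceil


def plan_chunks(total_rows: int, rows_per_task: int) -> list[dict]:
    """Split a load of total_rows into one work item per mapped task.

    Hypothetical helper: the partitioning strategy is an assumption,
    not FastFlow's documented behavior.
    """
    if rows_per_task <= 0:
        raise ValueError("rows_per_task must be positive")
    n_tasks = ceil(total_rows / rows_per_task)
    return [
        {
            "offset": i * rows_per_task,
            # The last chunk may be smaller than rows_per_task.
            "limit": min(rows_per_task, total_rows - i * rows_per_task),
        }
        for i in range(n_tasks)
    ]


chunks = plan_chunks(total_rows=250, rows_per_task=100)
```

In an Airflow DAG, a list like `chunks` would be passed to a task's `.expand()` call, so a larger load produces more mapped task instances without any change to the DAG file.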

Binary Passthrough (Fast Lane)

This is the "10x Faster" path in the diagram: data flows directly to the target (Snowflake) as a binary stream, bypassing the costly JSON serialization of the Slow Lane.
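
The difference between the two lanes can be illustrated with in-memory streams. This is only a sketch of the general idea: the function names `slow_lane` and `fast_lane` are hypothetical, and FastFlow's actual wire format and Snowflake loading path are not described here.

```python
import io
import json
import shutil


def slow_lane(src, dst):
    """Decode every line as JSON, then re-encode it before writing."""
    for line in src:
        record = json.loads(line)
        dst.write(json.dumps(record).encode("utf-8") + b"\n")


def fast_lane(src, dst, chunk_size=64 * 1024):
    """Copy bytes through unchanged in fixed-size chunks, without parsing."""
    shutil.copyfileobj(src, dst, chunk_size)


# Sample payload (made up for illustration).
payload = b'{"id": 1, "name": "a"}\n{"id": 2, "name": "b"}\n'

fast_out, slow_out = io.BytesIO(), io.BytesIO()
fast_lane(io.BytesIO(payload), fast_out)
slow_lane(io.BytesIO(payload), slow_out)
```

Both lanes deliver the same records, but the slow lane parses each record and builds a new object for it, while the fast lane never inspects the bytes it forwards.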

Broad Source Support

Ingest data from traditional databases such as SQL Server, PostgreSQL, and Oracle, using either change data capture (CDC) or batch methods.

Airflow Native Experience

There is no need to log in to another tool: FastFlow runs inside your existing Airflow environment as a plugin.

Familiar Interface

With the "ETL Studio" tab added to the Airflow menu, your team feels right at home.

Global Configuration

Manage project folders and database connections from a central location.

No-Code Setup

Select the source and target in the UI without editing DAG files.